By comparison, estimates from Epoch AI suggest the United States accounts for about 70 percent of global AI compute, while China holds roughly 15 percent, a nearly fivefold gap. RAND notes that the U.S. advantage in high-performance GPU clusters could be as much as tenfold.

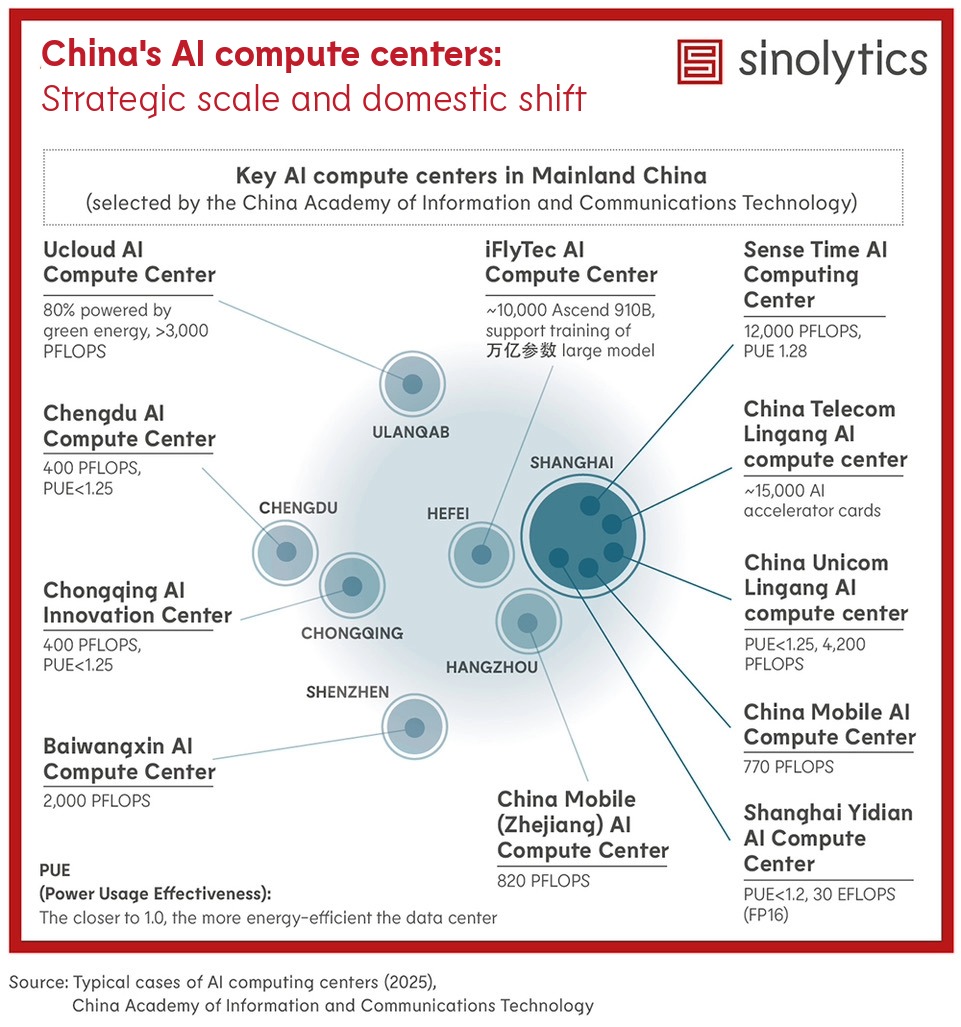

The central government has explicitly endorsed a strategy of building moderately ahead of current demand, encouraging local governments to accelerate construction through subsidies and other incentives. More than 30 cities are now developing AI compute centers, with projects led by major technology firms and state-owned enterprises.

The most advanced facilities are concentrated in tier-one and economically dynamic cities, underscoring the connection between infrastructure investment and regional growth. Under the “East Data West Compute” initiative, cities in the western hinterland are also expanding their data-center capacity, though many of these sites still focus on general-purpose computing and storage rather than high-end AI workloads.

U.S. export controls have made it increasingly difficult for China to obtain cutting-edge GPUs, creating a major bottleneck for scaling AI compute but also spurring the growth of a domestic chip ecosystem. As local alternatives mature, the government is urging data centers to adopt them.

Provinces such as Gansu, Guizhou, and Inner Mongolia are reported to offer electricity subsidies of up to 50 percent for large facilities using domestic chips. Recently, these incentives have evolved into mandatory requirements: new guidelines stipulate that any data center receiving government support must use only domestic chips, and they even require foreign chips to be replaced in projects already under way.

This shift underscores China's strategic focus on self-reliant computing infrastructure while creating large-scale adoption opportunities for domestic chipmakers.